Serverless Geoportal: where is the data?

This is a follow-up to my earlier post discussing the design of a Geoportal for Provita. In that post, I mentioned that we use the serverless JAMStack approach, with no servers and no databases to monitor and maintain.

So, “without” servers or databases, how are we organizing and managing the data of the site?

In this post, I answer the question by describing the data organization and the storage aspects of the Geoportal.

Data categories

Site data are organized in the following four categories: site content, uploaded Geographical Information System (GIS) data sets, generated GIS tiles, and user content.

Site content

Site content data is used by Gridsome, the site generator, to pre-render the site’s static pages at build time.

The data in this category are stored in JSON and Markdown formats and organized by data type. At build time, Gridsome file loaders, combined with Markdown and JSON transformers, load the data for each type into an in-memory database that the pre-rendering step accesses through GraphQL queries.

The following data types are defined in the site content category:

| Type | Description | Format | Gridsome Type Name |
|---|---|---|---|
| GIS Metadata | Describes the content of uploaded GIS files (e.g., description, keywords, date) | JSON, embedded Markdown | MetaData |
| About | Text and images of the “About” page | Markdown, JPG & PNG images | AboutData |
| Contact | Contact information | JSON | ContactData |
| FAQ | Questions and answers presented in the FAQ page | JSON, embedded Markdown | FAQData |
| News | News stories text and images | JSON, JPG & PNG images | NewsData |
| User Survey Template | Definition of fields to capture in the user survey | JSON | UserSurveyTemplate |
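To make the loading step more concrete, here is a minimal sketch of how one file-loader entry per content type could be declared. It assumes the `@gridsome/source-filesystem` plugin with its `path` and `typeName` options; the folder layout under `geoportal-data/` is hypothetical, and only the type names come from the table above. This is an illustration, not the Geoportal's actual configuration.

```typescript
// One content type: the Gridsome type name and the files that feed it.
interface ContentType {
  typeName: string;
  path: string;
}

// Shape of a plugin entry as it would appear in the Gridsome config.
interface SourcePlugin {
  use: string;
  options: { typeName: string; path: string };
}

// Type names are taken from the table above; the paths are assumptions
// about how the geoportal-data submodule might be laid out.
const contentTypes: ContentType[] = [
  { typeName: "MetaData", path: "geoportal-data/metadata/*.json" },
  { typeName: "AboutData", path: "geoportal-data/about/*.md" },
  { typeName: "ContactData", path: "geoportal-data/contact/*.json" },
  { typeName: "FAQData", path: "geoportal-data/faq/*.json" },
  { typeName: "NewsData", path: "geoportal-data/news/*.json" },
  { typeName: "UserSurveyTemplate", path: "geoportal-data/survey/*.json" },
];

// Build one file-loader entry per content type.
function buildSourcePlugins(types: ContentType[]): SourcePlugin[] {
  return types.map(function (t) {
    return {
      use: "@gridsome/source-filesystem",
      options: { typeName: t.typeName, path: t.path },
    };
  });
}

const plugins = buildSourcePlugins(contentTypes);
```

In a real project, an array like `plugins` would be exported from `gridsome.config.js`, with the JSON and Markdown transformer packages installed alongside it.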

The data in this category are stored in a dedicated GitHub repository (geoportal-data), separate from the main repository but referenced from it as a Git submodule. The submodule is updated every time the site is generated via the “build” command, which is triggered by the “publish” button on the Admin page.

The GitHub repository used to hold the site content data is public, but it could be made private if desired. However, since all of the data stored in this repository are published on a publicly accessible web site, there is no benefit in making the repository private. It is important to note that Admin users must be defined as “collaborators” in this repository to be able to update the data.

One big advantage of storing the content data in GitHub is that all changes are tracked and recorded. If necessary, it is easy to audit the data to find out who made a change, and reverting a change is not hard either.

Image credit: My Octocat

An additional benefit of using GitHub is that we can easily leverage GitHub identities. This, in combination with the repository “collaborator” concept, allows us to implement authentication and authorization capabilities in the Admin module. All without having to build our own identity subsystem!

Geoportal authorization dialog

Uploaded GIS data sets

These are the actual GIS files published on the Geoportal as .ZIP archive files. Two types of files stored inside the .ZIP archives are supported: vector files which use the Shapefile format and raster files which use the GeoTIFF format.

These files are stored using the Amazon Web Services (AWS) Simple Storage Service (S3), and they are configured with public-read access.

AWS S3

To upload these files, Admin users must be defined as “collaborators” in the geoportal-data GitHub repository. Since AWS itself knows nothing about GitHub collaborators, the rule is enforced by a Lambda function (Netlify function).
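The collaborator rule can be pictured as a small check that runs before anything else happens. The sketch below is not the Geoportal's actual function: it assumes the check relies on the GitHub REST API endpoint that tests whether a user is a repository collaborator, which answers with HTTP 204 when the user is one. The HTTP request itself is left out so the logic stays self-contained, and the function names, the `owner` argument, and the response shape are all hypothetical.

```typescript
// Build the GitHub REST API URL that checks whether `username` is a
// collaborator on `owner/repo`. `owner` is a placeholder supplied by the caller.
function collaboratorCheckUrl(owner: string, repo: string, username: string): string {
  return "https://api.github.com/repos/" +
    encodeURIComponent(owner) + "/" +
    encodeURIComponent(repo) + "/collaborators/" +
    encodeURIComponent(username);
}

// Interpret the status code of that request: GitHub answers 204 when the
// user is a collaborator. Every other status (404, 401, 403, ...) denies access.
function isCollaboratorStatus(status: number): boolean {
  return status === 204;
}

// Decide what the function returns to the browser (hypothetical shape).
function authorizeUpload(status: number): { statusCode: number; body: string } {
  return isCollaboratorStatus(status)
    ? { statusCode: 200, body: "authorized" }
    : { statusCode: 403, body: "not a collaborator" };
}
```

Treating every status other than 204 as a denial keeps the check fail-closed: an expired token or an API error never grants upload rights by accident.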

For better performance, files are uploaded directly to AWS S3 from the Admin user’s browser using AWS S3 presigned POST requests.
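A presigned POST is essentially a target URL plus a set of signed form fields that the browser must send back unchanged, followed by the file itself. The sketch below shows only how the form could be assembled, assuming the presigned data arrives in the `{ url, fields }` shape that the AWS SDKs return. The file is represented by a placeholder string; in a browser it would be a `File` appended to a `FormData` object. All names here are hypothetical.

```typescript
// Shape of a presigned POST: a target URL plus signed form fields.
interface PresignedPost {
  url: string;
  fields: { [name: string]: string };
}

// One entry of the multipart form, in the order it must be sent.
interface FormEntry {
  name: string;
  value: string;
}

// Order the form entries: all signed fields first, then the file.
// S3 ignores form fields that come after the file, so "file" must be last.
function buildUploadForm(post: PresignedPost, filePlaceholder: string): FormEntry[] {
  const entries: FormEntry[] = [];
  for (const name in post.fields) {
    if (Object.prototype.hasOwnProperty.call(post.fields, name)) {
      entries.push({ name: name, value: post.fields[name] });
    }
  }
  entries.push({ name: "file", value: filePlaceholder });
  return entries;
}
```

The resulting entries would be sent as a `multipart/form-data` POST to `post.url`, so the file travels from the browser straight to S3.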

We use AWS S3 instead of GitHub for these files because: 1) S3’s object size limits are far higher than GitHub’s per-file limits, so file size is not a practical constraint, and 2) uploads can be initiated directly from the Admin user’s browser, without any intermediaries and their associated overhead.

Generated GIS tiles

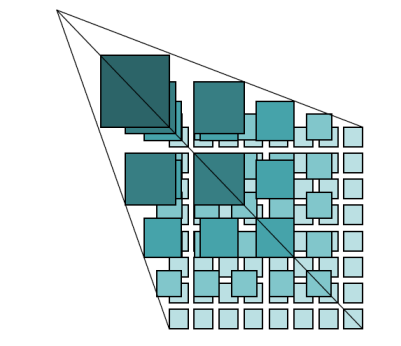

These files are used by the site’s interactive map to preview data sets, using standard map tiling schemes.

Image credit: OGC

The files are generated by AWS Batch jobs, which are triggered automatically by the site’s Admin function. They are stored in AWS S3 with public-read access and, as a side benefit, can be accessed by any GIS user through a standard map tiling scheme URL.

Vector tiles are stored in compressed (gzip) PBF format. Raster tiles are stored in PNG format.

Pre-generating map tiles is analogous to pre-rendering the site itself: instead of using a GIS server to generate (and cache) tiles on the fly, we generate all the tiles once (and whenever GIS files are replaced) and deploy them as static files.
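To give a feel for what “a standard map tiling scheme” means for static files, here is a rough sketch. It assumes the common XYZ scheme on a Web Mercator grid, where each tile is addressed as `zoom/x/y`; the post does not say which scheme the Geoportal uses, and the base URL and function names are made up for illustration.

```typescript
// Convert a longitude/latitude (degrees) to XYZ tile indices at a zoom level,
// using the usual Web Mercator tiling formulas.
function lonLatToTile(lon: number, lat: number, zoom: number): { x: number; y: number } {
  const n = Math.pow(2, zoom); // number of tiles per axis at this zoom
  const latRad = (lat * Math.PI) / 180;
  const x = Math.floor(((lon + 180) / 360) * n);
  const y = Math.floor(
    ((1 - Math.log(Math.tan(latRad) + 1 / Math.cos(latRad)) / Math.PI) / 2) * n
  );
  // Clamp so that lon = 180 or extreme latitudes stay inside the grid.
  const clamp = (v: number) => Math.max(0, Math.min(n - 1, v));
  return { x: clamp(x), y: clamp(y) };
}

// Address of one static tile file, e.g. ".../1/1/1.png" (hypothetical layout).
function tileUrl(baseUrl: string, zoom: number, x: number, y: number, ext: string): string {
  return baseUrl + "/" + zoom + "/" + x + "/" + y + "." + ext;
}

// Number of tiles needed to cover a bounding box at one zoom level.
function countTilesInBounds(
  west: number, south: number, east: number, north: number, zoom: number
): number {
  const topLeft = lonLatToTile(west, north, zoom);
  const bottomRight = lonLatToTile(east, south, zoom);
  return (bottomRight.x - topLeft.x + 1) * (bottomRight.y - topLeft.y + 1);
}
```

In this scheme each additional zoom level quadruples the number of tiles for the same area, which is why a single data set can easily produce thousands of small files.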

This data category is better stored in AWS S3 than in GitHub, because it consists of thousands of small files that do not need to be tracked for changes. The associated GitHub performance overhead would therefore not be justified.

User content

When end users fill out and submit the download survey, the data are saved in JSON format in AWS S3. These files can only be read by Admin users who are defined as “collaborators” in the geoportal-data GitHub repository. As with uploaded GIS data sets, this rule is enforced by a Lambda function (Netlify function).

Storing user content in AWS S3 makes it simple to implement a public-write, private-read policy without requiring any kind of identity or credentials from end users. It works well in our use case, where the data consist of anonymous survey submissions.

Summary

As a handy reference, here is a quick summary of the discussion above.

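| Category | Data types | Format | Access | Storage Platform |
|---|---|---|---|---|
| Site content | GIS Metadata, About, Contact, FAQ, News, User Survey Template | JSON, Markdown, JPG & PNG images | Public-read, Admin-write | GitHub |
| Uploaded GIS data sets | Vector files, raster files | Shapefile, GeoTIFF (inside .ZIP archives) | Public-read, Admin-write | AWS S3 |
| Generated GIS tiles | Vector tiles, raster tiles | PBF (gzip-compressed), PNG | Public-read, Admin-write | AWS S3 |
| User content | Download survey submissions | JSON | Public-write, Admin-read | AWS S3 |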

I hope this post provides valuable insights into how the data is managed in the Provita Geoportal.

In upcoming posts I will continue to dig into various implementation details of the site.

Au revoir !